Thanks for the reply!

I’m using a custom build of GStreamer 1.26.6.

Interestingly, gst-play works and is able to select all the streams, although I get no video (a misconfiguration on my side: GStreamer is unable to configure the video sink). This made me try another approach, and …

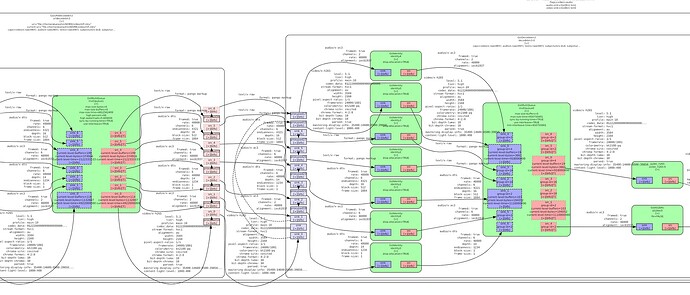

When I extract only the audio track and send it to WebRTC, all the streams are accessible!

And this is what happens internally:

I’m starting to suspect that the issue is the video track starting many seconds before the audio: this fills and blocks some queues while others stay empty.

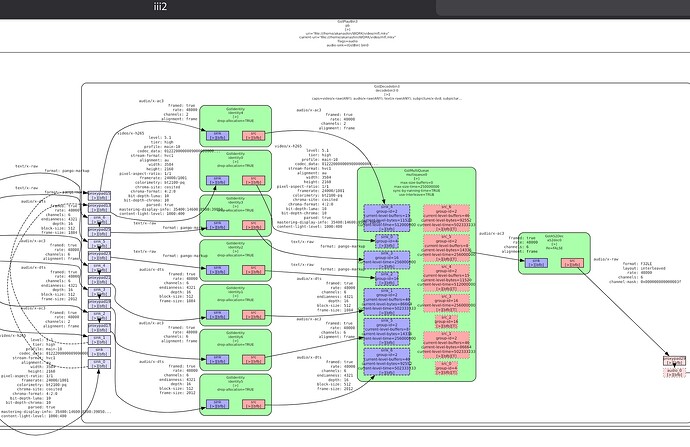
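To make the queue hypothesis concrete, here is a toy model in Python. It assumes a single demuxer thread feeding two bounded queues, and a downstream stage that takes nothing until every stream has data. `simulate` and all the numbers are hypothetical; this is not GStreamer code. For reference, the stock `queue` element's `max-size-time` defaults to 1 second.

```python
from collections import deque


def simulate(video_start_ms, audio_start_ms, duration_ms, step_ms, queue_limit_ms):
    """Toy model (hypothetical, not GStreamer code) of the suspected stall.

    One demuxer thread pushes timestamp-ordered packets into two bounded
    queues. Downstream is assumed to take nothing until it holds a buffer
    from every stream. Returns True if downstream can start, False if the
    demuxer blocks on a full queue first.
    """
    # Interleave packets by timestamp, the way a demuxer reads them from the file.
    packets = [(t, "video") for t in range(video_start_ms, duration_ms, step_ms)]
    packets += [(t, "audio") for t in range(audio_start_ms, duration_ms, step_ms)]
    packets.sort()

    queues = {"video": deque(), "audio": deque()}
    for ts, kind in packets:
        q = queues[kind]
        # A queue counts as full once it would span queue_limit_ms of media,
        # loosely like queue's max-size-time.
        if q and ts - q[0] >= queue_limit_ms:
            # Nothing drains it (downstream still waits for the other
            # stream), so the single demuxer thread would block here forever.
            return False
        q.append(ts)
        if all(queues.values()):
            return True  # every stream has data: downstream can start
    return False


# Audio starts 5 s after video; each queue holds at most 1 s of media.
print(simulate(0, 5000, 10000, 20, 1000))  # → False
# Same offset, but each queue may hold 6 s of media.
print(simulate(0, 5000, 10000, 20, 6000))  # → True
```

Under these assumptions, an audio track that starts 5 s after the video stalls the model, while a queue limit larger than the offset lets it start. Whether the real pipeline behaves this way depends on where the actual blocking happens.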

My pipeline looks like this (it is generated, not manually created):

    playbin3 name=pb uri=file:///home/akanashin/WORK/video/mfl.mkv
    capsfilter name=stream_1_v1 caps=video/x-raw
    capsfilter name=stream_1_a1 caps=audio/x-raw
    webrtcbin name=webrtcbin stun-server=stun://stun.l.google.com:19302 bundle-policy=max-bundle
    stream_1_v1.
    ! queue ! videoconvertscale ! videorate ! video/x-raw,format=I420,width=3584,height=2160,framerate=24000/1001
    ! x264enc bitrate=3000 speed-preset=ultrafast tune=zerolatency key-int-max=15
    ! rtph264pay mtu=1000 config-interval=-1 aggregate-mode=zero-latency pt=96
    ! application/x-rtp,a-mid="f_v_v1"
    ! webrtcbin.
    stream_1_a1.
    ! queue ! audioconvert ! audioresample ! audio/x-raw,format=S16LE,rate=48000,channels=2,layout=interleaved
    ! opusenc perfect-timestamp=true
    ! rtpopuspay mtu=1000 pt=97
    ! application/x-rtp,a-mid="f_a_a1"
    ! webrtcbin.

This pipeline never actually starts, even after all the required negotiation has completed. Handling only video or only audio makes it work.
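Since the description is generated, one way to rule out generator glitches (such as repeated caps fields or typographic quotes around the `a-mid` values) is to build each branch from structured data. Below is a minimal sketch, assuming a Python generator. `caps`, `branch` and `build_pipeline` are hypothetical helpers, not the actual generator. The sketch only reproduces the description above and does not by itself address the stall.

```python
def caps(media_type, **fields):
    """Build a caps string; keyword arguments make duplicate fields impossible."""
    # Python identifiers can't contain '-', so a_mid is emitted as a-mid.
    parts = [media_type] + [f"{k.replace('_', '-')}={v}" for k, v in fields.items()]
    return ",".join(parts)


def branch(src_name, elements, mid):
    """Link a named element through a queue and encoders into webrtcbin."""
    chain = ["queue", *elements, caps("application/x-rtp", a_mid=mid), "webrtcbin."]
    return f"{src_name}. ! " + " ! ".join(chain)


def build_pipeline(uri):
    """Hypothetical generator emitting the same gst-launch style description."""
    header = [
        f"playbin3 name=pb uri={uri}",
        "capsfilter name=stream_1_v1 caps=video/x-raw",
        "capsfilter name=stream_1_a1 caps=audio/x-raw",
        "webrtcbin name=webrtcbin stun-server=stun://stun.l.google.com:19302"
        " bundle-policy=max-bundle",
    ]
    video = branch("stream_1_v1", [
        "videoconvertscale",
        "videorate",
        caps("video/x-raw", format="I420", width=3584, height=2160,
             framerate="24000/1001"),
        "x264enc bitrate=3000 speed-preset=ultrafast tune=zerolatency key-int-max=15",
        "rtph264pay mtu=1000 config-interval=-1 aggregate-mode=zero-latency pt=96",
    ], "f_v_v1")
    audio = branch("stream_1_a1", [
        "audioconvert",
        "audioresample",
        caps("audio/x-raw", format="S16LE", rate=48000, channels=2,
             layout="interleaved"),
        "opusenc perfect-timestamp=true",
        "rtpopuspay mtu=1000 pt=97",
    ], "f_a_a1")
    return " ".join(header + [video, audio])


desc = build_pipeline("file:///home/akanashin/WORK/video/mfl.mkv")
print(desc)
```

Keeping caps as keyword arguments means a field can only appear once, and plain ASCII values avoid any quoting ambiguity when the string is parsed.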